When two people interact, their brain activity becomes synchronized, but until now it was unclear to what extent this “brain-to-brain coupling” is due to linguistic information or other factors, such as body language or tone of voice. Researchers report on August 2 in the journal Neuron that brain-to-brain coupling during conversation can be modeled by taking into account the words used during that conversation and the context in which they are used.

“We can see linguistic content emerge word by word in the speaker’s brain before they actually articulate what they are trying to say, and the same linguistic content quickly reappears in the listener’s brain after they hear it.”

Zaid Zada (@zaidzada), first author and Princeton University neuroscientist

To communicate verbally, we need to agree on the definitions of different words, but these definitions can change depending on the context. For example, without context, it would be impossible to know whether the word “cold” refers to a temperature, a personality trait, or a respiratory infection.

“The contextual meaning of words as they appear in a particular sentence or in a particular conversation is very important to how we understand each other,” says neuroscientist and co-senior author Samuel Nastase (@samnastase) of Princeton University. “We wanted to test the importance of context in aligning brain activity between speaker and listener to try to quantify what is shared between brains during conversation.”

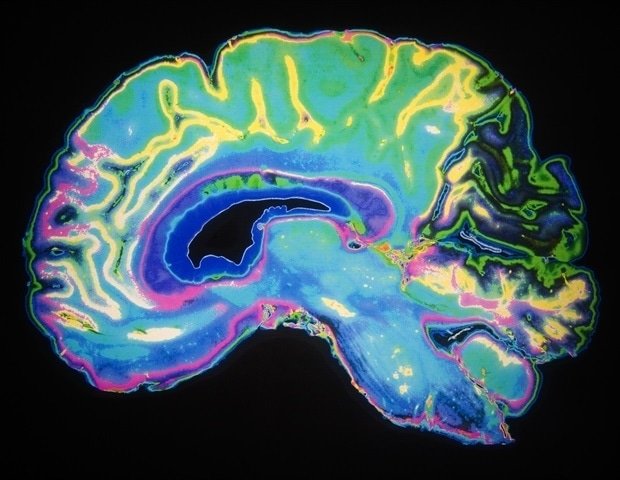

To examine the role of context in driving brain coupling, the team collected brain activity data and conversation transcripts from pairs of epilepsy patients during natural conversations. Patients underwent intracranial monitoring using electrocorticography for unrelated clinical purposes at the New York University School of Medicine Comprehensive Epilepsy Center. Compared to less invasive methods such as fMRI, electrocorticography records brain activity at extremely high resolution because electrodes are placed in direct contact with the surface of the brain.

The researchers then used the large language model GPT-2 to extract the context surrounding each word used in the conversations, and used this information to train a model to predict how brain activity changes as information flows from speaker to listener during conversation.

Using the model, the researchers were able to observe the brain activity associated with the context-specific meaning of words in the brains of both the speaker and the listener. They showed that word-specific brain activity peaked in the speaker’s brain about 250 ms before they said each word, and corresponding peaks in brain activity associated with the same words appeared in the listener’s brain about 250 ms after they heard them.
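The timing result above can be illustrated with a toy lag analysis. The sketch below is not the authors' pipeline, which uses GPT-2 contextual embeddings and electrocorticography recordings. It assumes a single made-up word feature, synthetic "speaker" and "listener" signals built to lead and trail that feature by 250 ms, and 50 ms time bins. All names (`lagged_correlation`, `peak_lag_ms`, `shift`) are hypothetical helpers introduced for illustration.

```python
import random


def pearson(a, b):
    """Pearson correlation between two equal-length lists."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def lagged_correlation(feature, signal, max_lag):
    """Correlate feature[t] with signal[t + lag] for every lag (in bins)."""
    n = len(feature)
    result = {}
    for lag in range(-max_lag, max_lag + 1):
        start = max(0, -lag)
        stop = min(n, n - lag)
        result[lag] = pearson(feature[start:stop],
                              signal[start + lag:stop + lag])
    return result


def peak_lag_ms(correlations, bin_ms):
    """Lag (in ms) at which the correlation is highest."""
    return max(correlations, key=correlations.get) * bin_ms


def shift(series, lag):
    """Return out with out[t + lag] = series[t], zero-padded at the edges."""
    n = len(series)
    out = [0.0] * n
    for t, value in enumerate(series):
        if 0 <= t + lag < n:
            out[t + lag] = value
    return out


rng = random.Random(0)   # fixed seed so the toy data are reproducible
BIN_MS = 50              # assumed bin width; 5 bins = 250 ms
word_feature = [rng.gauss(0, 1) for _ in range(2000)]

# Synthetic signals: speaker activity precedes each word by 5 bins,
# listener activity follows it by 5 bins, both with added noise.
speaker = [v + rng.gauss(0, 0.5) for v in shift(word_feature, -5)]
listener = [v + rng.gauss(0, 0.5) for v in shift(word_feature, 5)]

speaker_peak = peak_lag_ms(lagged_correlation(word_feature, speaker, 10), BIN_MS)
listener_peak = peak_lag_ms(lagged_correlation(word_feature, listener, 10), BIN_MS)
print(speaker_peak, listener_peak)  # -250 250
```

A negative peak lag means the signal carries word information before the word occurs, as reported for the speaker; a positive peak lag means it carries that information afterward, as reported for the listener. The 250 ms offsets here are built into the synthetic data, so the sketch only demonstrates how such a peak would be read out, not evidence for the finding itself.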

Compared to previous work on speaker-listener brain coupling, the team’s context-based model was better able to predict shared patterns in brain activity.

“This shows how important context is, because it better explains the brain data,” Zada says. “Large language models take all these different elements of linguistics, such as syntax and semantics, and represent them in a single high-dimensional vector. We show that this type of unified model is capable of outperforming other hand-engineered models from linguistics.”

In the future, the researchers plan to extend their study by applying the model to other types of brain activity data, for example fMRI data, to investigate how parts of the brain not accessible by electrocorticography work during conversations.

“There is a lot of exciting future work to be done looking at how different brain regions coordinate with each other on different time scales and with different kinds of content,” says Nastase.

This research was supported by the National Institutes of Health.

Journal Reference:

Zada, Z., et al. (2024) A shared model-based linguistic space for transmitting our thoughts from brain to brain in natural conversations. Neuron. doi.org/10.1016/j.neuron.2024.06.025.